Formatting Security - extraction and preprocessing of security data, and application of metrics to evaluate it

Even after the ‘capture’ performed by digital cameras, visual data is not given. Instead, it is re-made, selected, preprocessed and formatted in order to be readable and usable by computer vision software. Most of the ‘data’ captured is discarded or backgrounded in this process, while other parts are synthesised and augmented, even before the (necessarily political) metrics that try to capture something about the social world are applied to it.

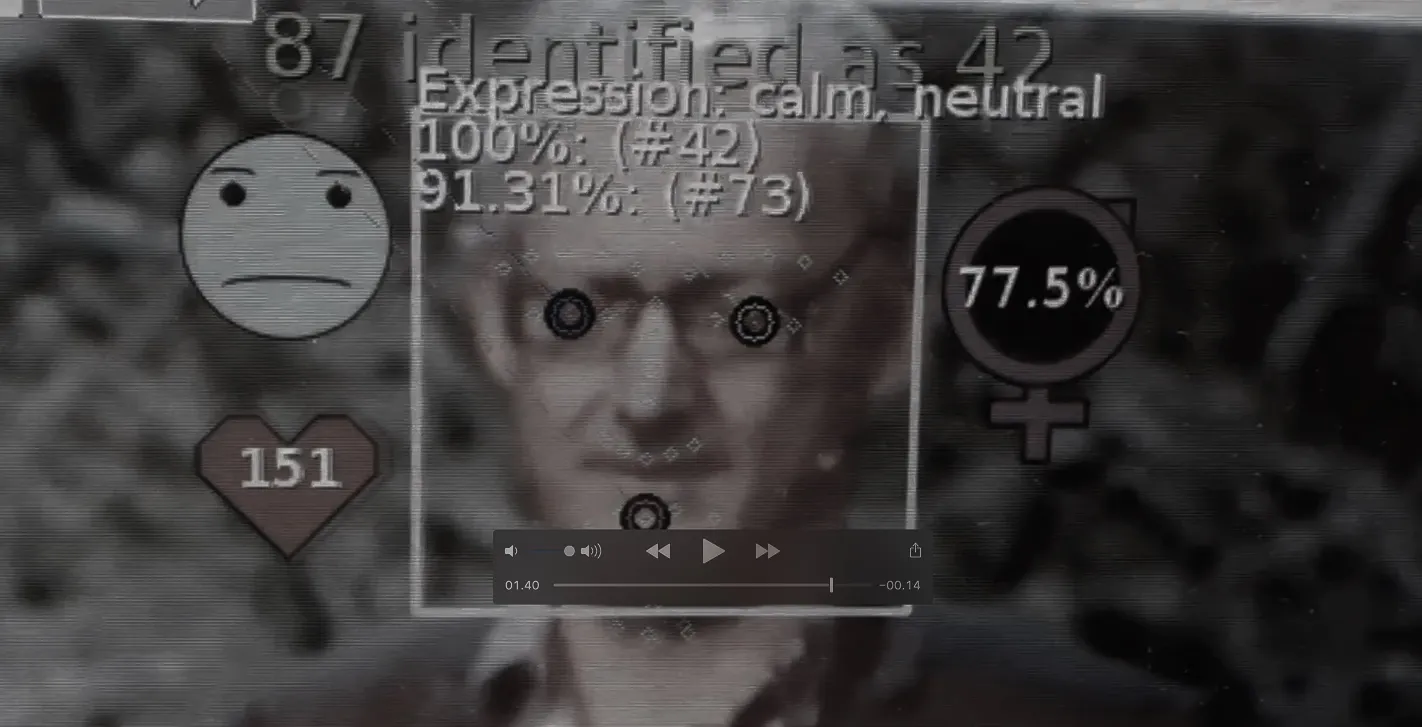

How are digital images turned into security knowledge, and what does this ‘turning into’ do to the subjects of (in)security? The video below uses the absurdity of a deliberate mismatch to highlight how computer vision ‘sees’. The categories and metrics will always be oblivious to the social situation they depict, and often absurd.

It’s clear in the video that the recognition system registers radically different properties of the social than the average viewer would. These properties are not wrong per se, just ill-suited to some understandings of the social situation, while suited to others.

Most often, security is treated visually by way of images being regarded as evidence of a world external to the digital imaging apparatus, as evidence of what happened somewhere. But technologies relying on computer vision exceed this simple equivalence, even if they also build on it. I am interested in the processes that take place between the recruitment of a digital image into such a system and the report of an output metric. Outputs can be a score evaluating a likely match against a database, a probabilistic identification of either commonalities in image composition (scene detection) or of the presence of some category of objects in the image (object detection), or some other metric. Being interested in these processes is difficult for a social scientist, but I think that these behind-the-scenes digital transformations are where a lot of the social changes coming with the digital society are located.

We’ve all seen this in how social media has transformed debate, in ways that were unforeseen even by its creators, and I think we’ll see changes on the same scale when our understanding of the social world is mediated by recognition technologies. I don’t know exactly how, but the short video above tries to make this gap apparent through its uncanny mismatch of contents. The video shows recognition technologies applied to my interview with activist academics Lucy Suchman and Toby Walsh, both involved in debating the role that AI and recognition technologies should play in war and weapons systems.

The following texts consider technological forms of seeing and how they relate to security and violence.

Saugmann, R. (2018). The Art of Questioning Lethal Vision: Mosse’s Infra and Militarized Machine Vision. [Unpublished manuscript]

Saugmann, R., Möller, F., & Bellmer, R. (2020). Seeing like a surveillance agency? Sensor realism as aesthetic critique of visual data governance. Information, Communication & Society, 23(14), 1996–2013. https://doi.org/10.1080/1369118X.2020.1770315

The implementation of computer vision-based security governance is often shrouded in secrecy and subject to sloppy oversight. Sara Pynttäri, who was my intern in 2023, wrote a short report on such technologies in Finland, Recognition technologies in Finnish Public Order.